AI has changed our everyday lives, speeding up work and changing how we interact. Yet, with great power comes great risk. GhostGPT is an alarming new shadow version of popular AI, and bad actors are now using it to build phishing attacks, craft malware, and run large-scale cybercrimes. While platforms like ChatGPT impose strong safety barriers, GhostGPT is a no-holds-barred variant made specifically for harm. This article digs into what GhostGPT really is, how it’s being abused, and why cybersecurity experts are sounding the alarm.

What is GhostGPT?

GhostGPT is not a standard product you will find on official websites, nor a program heavily documented on research sites. The term is circulating in security circles and underground chatter to describe builds of open-source language models, such as GPT-J or LLaMA, stripped of all filters and safety nets. The authors of these underground builds copy the architecture of popular AI but remove every ethical or safety guard, so malicious prompts go unchallenged. The upshot? GhostGPT can easily craft a convincing phishing email, generate malware snippets, or deliver social engineering scripts with no pushback.

In short, it is AI with a bad conscience: open-sourced, sometimes re-trained with malicious samples, and circulating freely among criminals.

Where Did GhostGPT Come From?

GhostGPT emerged from hidden corners of the internet: shady development groups on the dark web, invite-only Discord and Telegram chat rooms, and obscure coding forums.

Many people think GhostGPT runs on freely available models like GPT-J or LLaMA, which anyone can modify. Although these models are meant for research and experimentation, bad actors can alter them to strip out safeguards. This lets GhostGPT answer questions that normal tools like ChatGPT or Claude would refuse. Its anonymous setup and ability to spread without a central server make it tricky to track or shut down.

How Cybercriminals Use GhostGPT

Cybercriminals are putting GhostGPT to work in several worrying ways:

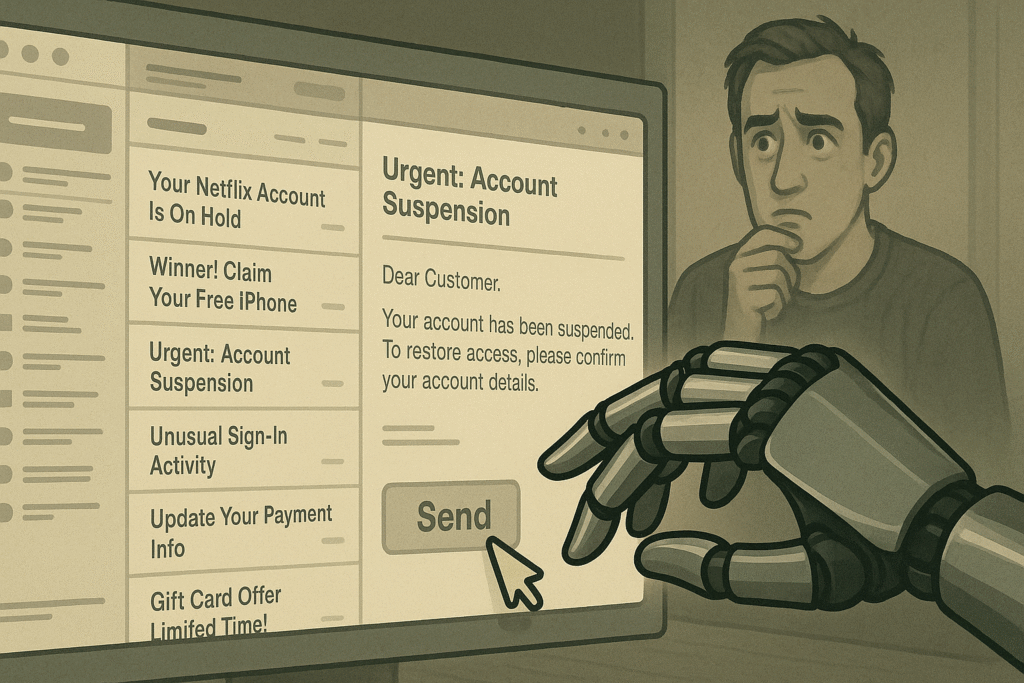

1. Phishing Campaigns

Criminals prompt GhostGPT to write phishing emails that are polished, error-free, and tailored to individual victims. The fake messages pose as banks, tech firms, or government departments and often sail past spam filters.

2. Malware Development

GhostGPT can spit out malware, ransomware, and backdoor payloads. Given a series of prompts, it can assemble a working keylogger or data-stealing script, then help disguise the code so antivirus tools miss it.

3. Social Engineering

Attackers use GhostGPT to fake text conversations that feel like chatting with a real person. The model can adjust its tone and details on the fly, making it harder for victims to spot a scam. It can pretend to be your colleague, a tech support worker, or a crush, fooling people into giving away passwords or installing malware.

4. Bypassing Security Filters

Because it runs outside monitored APIs and usage rules, GhostGPT can be tuned to dodge content scanners, churn out dangerous text, and produce believable replies that can be pasted straight into office chat.

Why GhostGPT is Dangerous

GhostGPT hands cybercrime to everyone. You don’t need to know how to code anymore to run a big attack. You just enter a few prompts, and the bot churns out phishing messages, malware, and convincing replies. The attacks get bigger, arrive more often, and leave fewer fingerprints. Regular security tools struggle against it. Unlike the old spam riddled with typos or the glitchy scripts that broke, this content looks smart and clean, tricking people and scanners at the same time.

Real-World Reports or Cases

GhostGPT hasn’t shown up yet in any public breach report, but more than a few recent incidents line up with how these AI tools work:

- Dark Web Forum Leaks: Cybersecurity experts have spotted hackers trading GhostGPT prompt templates on underground forums. These templates can create phishing emails and Python malware that look and act real in just a few seconds.

- Corporate Phishing Incident: During a cybersecurity conference, professionals discussed a recent attack on a mid-sized U.S. tech company. The HR team received a fake job application that appeared very polished. Later analysis indicated it was AI-generated. The attached macro-infected resume installed spyware on the company’s network.

- Proofpoint and Check Point Reports: The two cybersecurity firms have detected more phishing campaigns that bear the hallmarks of AI writing: flawless grammar, content customized for specific regions, and follow-up emails that adapt to earlier replies. Although no evidence ties these waves directly to GhostGPT, the patterns line up with its rumored features.

- Hacker Tutorials Using AI: On hidden forums, step-by-step guides show users how to launch malware campaigns or create phishing funnels with unrestricted AI models. Some of these guides mention GhostGPT by name or refer to other models whose safety locks have been bypassed.

How to Detect and Protect Against AI-Powered Threats

To guard against GhostGPT-style attacks, both individuals and businesses should tighten their defenses:

- Individuals should:

– Question any email that pushes for urgent action, especially if it requests personal or login info.

– Refrain from clicking on unknown links or downloading attachments that appear without a clear purpose.

– Use strong passwords and turn on 2FA.

- Organizations should:

– Implement AI tools that spot strange writing and odd user behavior.

– Hold regular training sessions with phishing tests and AI-powered threat awareness.

– Keep software, email gateways, and security products patched and current.
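The first tip for individuals, questioning messages that pair urgency with a request for personal or login info, can be roughly approximated in code. The following is a minimal sketch, not a production filter: the pattern lists and the `flag_email` function are hypothetical names invented for illustration, and real AI-written phishing can easily avoid such simple keywords.

```python
import re

# Hypothetical pattern lists chosen for illustration only;
# a real filter would need far broader coverage and tuning.
URGENCY_PATTERNS = [
    r"\burgent(ly)?\b",
    r"\bimmediately\b",
    r"\bwithin \d+ (hours?|minutes?)\b",
    r"\baccount (will be|has been) (suspended|locked|closed)\b",
    r"\bact now\b",
]
CREDENTIAL_PATTERNS = [
    r"\bpassword\b",
    r"\blog ?in\b",
    r"\bverify your (account|identity)\b",
    r"\bcard number\b",
]


def count_matches(patterns, text):
    """Return how many of the patterns appear at least once in the text."""
    return sum(1 for p in patterns if re.search(p, text, flags=re.IGNORECASE))


def flag_email(body):
    """Flag a message that combines urgent language with a credential request."""
    urgency = count_matches(URGENCY_PATTERNS, body)
    credentials = count_matches(CREDENTIAL_PATTERNS, body)
    return {
        "urgency_hits": urgency,
        "credential_hits": credentials,
        "suspicious": urgency > 0 and credentials > 0,
    }


if __name__ == "__main__":
    sample = (
        "Your account will be suspended within 24 hours. "
        "Verify your account and confirm your password immediately."
    )
    print(flag_email(sample))
```

A check like this only catches the crudest lures, which is why the list above pairs it with training and behavioral monitoring rather than relying on keywords alone.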

How to Stay Safe from These AI Threats

- Stay informed: Read cybersecurity blogs and follow the latest scams.

- Use browser protection: Use extensions that warn you about scams and dangerous websites.

- Update everything: Upgrade your operating system, browsers, and antivirus as soon as updates arrive.

- Don’t trust appearances: Just because an email looks professional doesn’t make it safe. Be extra cautious if it’s unsolicited or pushes for fast action.
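One concrete way appearances mislead is a link whose visible text names one domain while the underlying URL points somewhere else. The sketch below shows how a tool might surface that mismatch, using only the Python standard library. The names `LinkCollector` and `mismatched_links` are hypothetical, the domains are reserved example domains, and the check is an assumption about how such a warning could work, not a description of any particular browser extension.

```python
import re
from html.parser import HTMLParser
from urllib.parse import urlparse

# Loose pattern for something that looks like a domain name in visible text.
DOMAIN_RE = re.compile(r"\b(?:[a-z0-9-]+\.)+[a-z]{2,}\b", re.IGNORECASE)


class LinkCollector(HTMLParser):
    """Collect (href, visible text) pairs for every <a> element."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def mismatched_links(html):
    """Return (shown_domain, actual_host) pairs where the two disagree."""
    collector = LinkCollector()
    collector.feed(html)
    flagged = []
    for href, text in collector.links:
        actual = (urlparse(href).hostname or "").lower()
        shown = DOMAIN_RE.search(text)
        if not shown or not actual:
            continue  # nothing to compare
        shown_domain = shown.group(0).lower()
        # Accept an exact match or a subdomain of the shown domain.
        if actual != shown_domain and not actual.endswith("." + shown_domain):
            flagged.append((shown_domain, actual))
    return flagged


if __name__ == "__main__":
    email_html = (
        '<a href="http://login.attacker.test/reset">www.example.com</a> '
        '<a href="https://www.example.com/help">www.example.com/help</a>'
    )
    print(mismatched_links(email_html))
```

This does not catch lookalike domains or links with generic text such as "click here", so it complements the habit of hovering over links before clicking rather than replacing it.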

What Cybersecurity Experts Are Saying

Globally, security teams are sounding alarm bells. Norton Labs reports AI-made phishing emails have doubled in twelve months. CrowdStrike researchers warn that AI-assisted attacks are now standard, with tools like GhostGPT speeding up the threat. Experts want governments and AI creators to strengthen protections and fund detection tools for spotting AI-made malicious content. They warn that without regulations, we could soon face cyberattacks so clean we won’t see them coming.

Should Tools Like GhostGPT Be Banned or Regulated?

GhostGPT and similar tools bring up sharp ethical and legal issues. Should we outright ban them? Or is it smarter to create rules to manage their use?

- Arguments for banning: These tools are already being used to create harm; a ban could limit access and empower law enforcement to take quicker action.

- Arguments against banning: Bans often fail, especially with open-source code that can’t be locked down. A simple prohibition may only force users to hide their activity deeper in the shadows.

- Middle-ground solutions:

– Require anyone building advanced language models to secure a license.

– Add digital watermarks to content generated by AI.

– Demand full transparency in how AI models are built and shared.

What Authorities and Platforms Are Doing

Companies like OpenAI, Anthropic, and Meta are rolling out stronger safety checks and usage-tracking systems. Legislators in the EU, the US, and India are writing rules to curb misuse. Cybersecurity agencies are developing models to spot AI threats and are working with ethical hackers to monitor illegal use. Yet enforcement is still an uphill climb. The anonymous, decentralized nature of the platforms that host models like GhostGPT makes oversight tough.

Future of AI in Cybercrime

As AI keeps getting smarter, cybercrime is getting its own upgrade. Experts now talk about an AI arms race, where security teams and attackers keep creating smarter systems to outsmart each other. GhostGPT is only the first wave. Tomorrow’s tools will likely be even stronger, easier to customize, and harder to trace. The problem is bigger than just firewalls and code. It’s an ethical puzzle, a legal tangle, and a social question. We need rules that let innovation keep rolling while making sure it doesn’t roll over us.

Conclusion

GhostGPT is a flashing warning about what can happen when we unleash strong AI and leave it unchecked. Whether it’s crafting believable phishing emails, designing smarter malware, or manipulating victims, tools like GhostGPT expose the shadow side of generative AI. Yet we can still push back. Awareness, better defenses, and careful governance are our shields. The trick is to keep learning, stay cautious, and get involved in making the online world a little safer for everyone.

“When powerful tools fall into the wrong hands, it’s not just data at risk — it’s trust, safety, and the future of the internet.”